GitHub has updated its Copilot coding assistant with new features including a “vulnerability filtering system” to block insecure coding patterns such as SQL injection or hard-coded credentials. Improved AI models and techniques have also raised the acceptance rate for suggested code from 27 percent in June 2022 to 46 percent today, and to 61 percent for Java code.

The new features include an upgraded OpenAI Codex model, improved techniques for context understanding, and an updated client-side model which reduces the number of unwanted suggestions.

The vulnerability scanner uses LLMs (Large Language Models) to “approximate the behavior of static analysis tools,” according to the post by Senior Director of Product Management Shuyin Zhao. This approach is unlikely to be as rigorous as static analysis tools that run after the code is complete, but picking up likely insecurities during the coding process means they are more likely to be fixed, and without interrupting the coding flow.
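For readers unfamiliar with the SQL injection pattern mentioned above, the following is a minimal sketch of the kind of code such a filter is meant to discourage, alongside the conventional fix. It is not taken from GitHub's post and says nothing about how the filter itself works; the table, the function names, and the sample payload are all hypothetical, and Python's built-in `sqlite3` module is used only for convenience.

```python
import sqlite3


def make_demo_db():
    """Build a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # Insecure: user input is pasted straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: a parameterized query keeps the input as data, not SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "x' OR '1'='1"  # hypothetical malicious input
    print("unsafe:", find_user_unsafe(conn, payload))
    print("safe:  ", find_user_safe(conn, payload))
```

With the crafted input, the unsafe version returns every row in the table, while the parameterized version returns nothing because no user has that literal name.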

Awkward issues remain over the potential for Copilot to copy public code without providing its license. The FAQ says that developers should take precautions which include “rigorous testing, IP scanning, and checking for security vulnerabilities. You should make sure your IDE or editor does not automatically compile or run generated code before you review it.” These precautions are potentially burdensome, and it is common for IDEs to compile and run code in the background to support intelligent error correction, making it hard for developers to comply. There is an optional filter which is intended to block copies of public code, but GitHub implies that it cannot be relied upon.

Despite these concerns, and related lawsuits, feedback from developers is reasonably good. A coder on Reddit backed the claimed percentages, saying that “after using Copilot for about 2 years daily, I can say these numbers reflect my personal experience. It depends on the kind of code you write of course, but there is just so much boilerplate that CP spits out effortlessly.” He added that Copilot also helps to avoid typos, write documentation, and come up with unit tests. Another described Copilot as “a really big time saver,” helping to avoid the need to stop coding in order to study documentation.

Others are less positive. “Most of my experience with Copilot is hitting escape to avoid doing what it’s suggesting,” said one. No doubt the nature of the project, and the extent to which it covers well-trodden ground, is a factor in this. “It’s really good for rote stuff,” said a developer on Hacker News; and developers do spend plenty of time on coding chores.

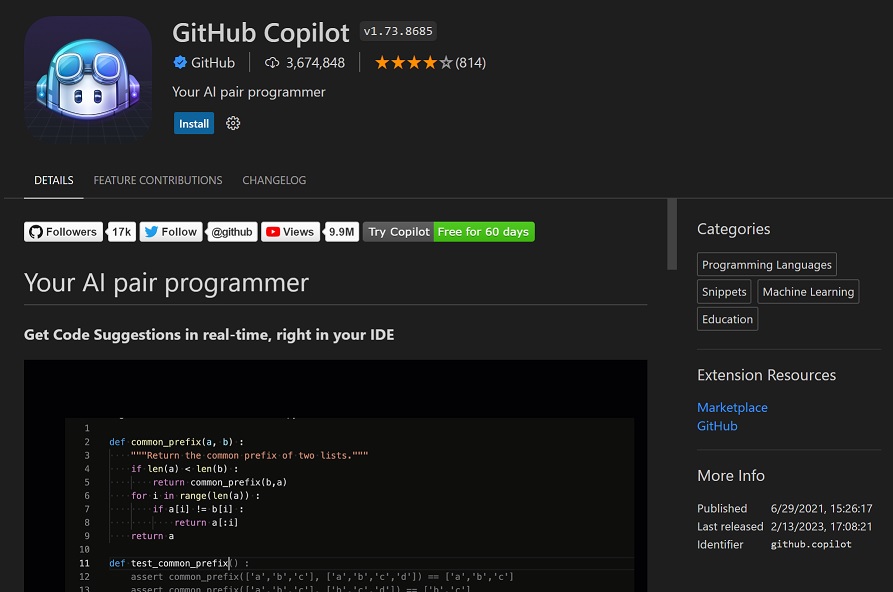

The natural home for Copilot may be Visual Studio Code, rather than Visual Studio. “Copilot for Visual Studio (i.e. not VS Code) is horribly broken … It isn’t the ‘AI’ side that is broken. It is just a buggy extension, crashes constantly, and even when it does give suggestions it takes far too long to do so harming your workflow,” said another dev, who is not alone, judging by the many negative comments on the extension. By contrast, reviewers of the VS Code extension, which has over 3.5 million installs, give it a four-star rating on average.

Copilot costs $10 per month for individuals, and $19 per user per month for businesses, with the business offer adding the ability to enforce organization policy; a thin benefit given that the price is nearly double. It does not take much developer time saved to pay for it many times over, the caveat being that the AI is only guessing at the purpose of the developer and is capable of generating code that is nearly but not quite right.
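As a rough illustration of that arithmetic, the sketch below works out how many minutes of developer time per month would need to be saved to cover the subscription. The two prices are those quoted above; the hourly cost of $60 is purely a hypothetical figure chosen for the example, and the function name is likewise made up.

```python
def break_even_minutes(monthly_price_usd, hourly_cost_usd):
    """Minutes of saved developer time per month that equal the subscription price."""
    return monthly_price_usd * 60 / hourly_cost_usd


if __name__ == "__main__":
    hourly_cost = 60  # hypothetical fully loaded developer cost, USD per hour
    for plan, price in [("individual", 10), ("business", 19)]:
        minutes = break_even_minutes(price, hourly_cost)
        print(f"{plan}: {minutes:.0f} minutes per month to break even")
```

On that assumption the break-even point is 10 minutes a month for the individual plan and 19 minutes for the business plan, though the calculation ignores any time spent reviewing or correcting suggestions that are nearly but not quite right.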