By Eberhard Wolff, February 22

Do you remember those guys who invented virtualization, founded a company, got rich and lived happily ever after, remembered fondly by all? Well, that’s almost the story of VMware. But with some of the people behind Docker, the story might turn out differently.

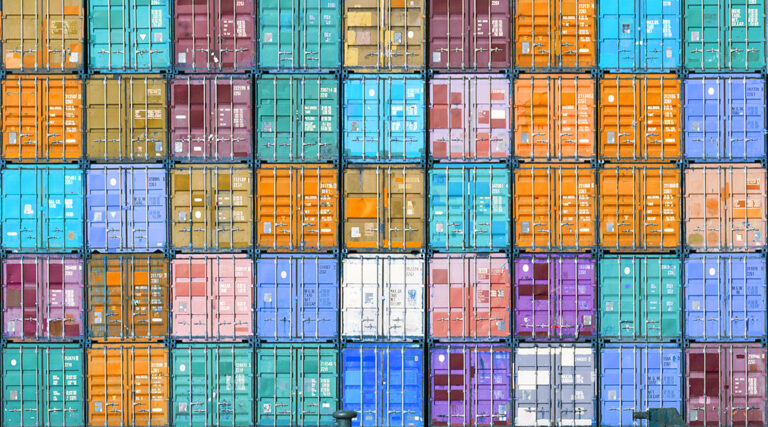

For several years, Docker has been a very important technology for my clients. Docker runs applications in containers, which are much more lightweight than virtual machines.

A virtual machine simulates a complete computer that runs its own full operating system instance. Both simulating the computer and running many operating system instances require a lot of computing power. In contrast, Docker containers all share a single operating system instance, yet each container has its own network interface and file system.

Because each container has its own network interface and file system, Docker still isolates containers from one another very well. At the same time, a process in a Docker container consumes about as few resources as a process running directly on the operating system. Docker therefore achieves an efficiency that dwarfs that of virtualization: running hundreds of Docker containers on a laptop is simply not an issue.

Gaps creeping in

In addition, Docker solves another problem: installing software. Before Docker, developers had to use tools such as Chef or Puppet to install software reproducibly. A script defines the state the server should be in, and the tool derives the required installation steps from it. If no software is present on the server, the tool installs the software from scratch. If an old version is installed or part of the software is already present, the tool completes or updates the installation. Because running the script again on a server that is already in the desired state changes nothing, this approach is called idempotent. Unfortunately, defining the desired state is complicated and time-consuming. In addition, gaps easily creep in, because parts of the state are left undefined.
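To make the idea of deriving steps from a desired state more concrete, here is a toy sketch in Python. It is not how Chef or Puppet work internally (both have their own languages and far richer resource models); the names `DESIRED`, `plan` and `converge`, as well as the package names and versions, are hypothetical. It shows the two properties described above: running it a second time does nothing, and anything the desired state does not mention is silently left alone.

```python
from typing import Dict, List

# Hypothetical desired state: package name -> required version.
DESIRED: Dict[str, str] = {"nginx": "1.24", "openjdk": "17"}


def plan(desired: Dict[str, str], installed: Dict[str, str]) -> List[str]:
    """Derive the steps needed to move from the installed state to the desired state."""
    steps = []
    for package, version in sorted(desired.items()):
        current = installed.get(package)
        if current is None:
            steps.append(f"install {package} {version}")
        elif current != version:
            steps.append(f"upgrade {package} {current} -> {version}")
        # Already at the desired version: nothing to do.
    return steps


def converge(desired: Dict[str, str], installed: Dict[str, str]) -> List[str]:
    """Apply the planned steps to the simulated server state and return them.

    Packages that are installed but not listed in the desired state are not
    touched at all; this is exactly where gaps creep in.
    """
    steps = plan(desired, installed)
    installed.update(desired)  # simulate executing the steps
    return steps
```

For a server with `{"nginx": "1.22", "curl": "8.0"}`, the first call to `converge` upgrades nginx and installs openjdk, and a second call returns an empty list. `curl`, which nobody declared, stays on the server unmanaged.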

Docker, on the other hand, uses images. An image is created by a script (the Dockerfile) that contains every step of the installation, starting from a clean base. Compared to an idempotent installation, this approach is much simpler and guarantees a complete installation without any gaps. Behind the scenes, images are made up of layers, and an image can reuse the layers of other images. This saves disk space, and building new images is incredibly fast because only the parts that changed actually have to be built.
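The reuse of layers can be illustrated with a simplified model in Python. This is an assumption-laden sketch, not Docker's actual implementation: here a layer is identified only by a hash of its parent layer and its build step, whereas Docker's real cache rules are more detailed. The functions `layer_id` and `build` and the step strings are hypothetical.

```python
import hashlib
from typing import Dict, List, Tuple


def layer_id(parent: str, step: str) -> str:
    """Identify a layer by its parent layer and the step that creates it."""
    return hashlib.sha256(f"{parent}\n{step}".encode()).hexdigest()[:12]


def build(steps: List[str], cache: Dict[str, str]) -> Tuple[str, List[str]]:
    """Build an image from a list of steps, reusing layers found in the cache.

    Returns the ID of the final layer and the steps that actually had to run.
    Because each ID includes the parent ID, changing an early step changes
    the IDs of all later layers, so those steps run again.
    """
    parent = ""
    executed = []
    for step in steps:
        lid = layer_id(parent, step)
        if lid not in cache:
            cache[lid] = step  # simulate running the step and storing the layer
            executed.append(step)
        parent = lid
    return parent, executed
```

Building `["start from base image", "install Java runtime", "copy app v1"]` runs all three steps. Rebuilding with `"copy app v2"` as the last step runs only that step, and a second image that shares the first two steps reuses their layers as well, which is where both the speed and the disk-space savings come from.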

Docker has profited greatly from good timing: the trend towards microservices means that a large number of applications must be operated efficiently. The low resource consumption and easy installation came just at the right time. And, of course, Docker also helps with the frequent deployments in modern continuous delivery pipelines.

Successful

That’s why an article at the end of last year (here) reporting the death of Docker seems greatly exaggerated at first. But the article is actually about something completely different: a successful technology does not necessarily mean commercial success for its inventor. Sun Microsystems invented Java, an important technology that became the foundation of a whole industry, but never profited much from it. In the end, Sun lost its independence and was taken over by Oracle.

Maybe this fate threatens the company behind Docker: Docker, Inc. Docker itself is just a foundation. On its own, it runs containers on a single server, which is of limited use. If the server crashes, all the Docker containers fail, and scaling is limited by the performance of that one server. Therefore, other systems build on top of Docker and run Docker containers in a cluster of servers. They complement Docker with load balancing and failover, and offer other concepts that are important specifically for microservices, such as service discovery. Many of these features are otherwise found only in enterprise virtualization. Such cluster container technologies are an important part of Google’s infrastructure and have proven to work well in that demanding environment.

Docker, Inc. provides Docker Swarm Mode as its solution for running Docker in a cluster. It is a simple option, but it cannot compete with Kubernetes from Google and the Linux Foundation: Kubernetes is more complex, but offers a complete solution. As a runtime environment for microservices, Kubernetes is currently becoming a de facto standard. It also has the support of all major cloud providers, including Amazon, Microsoft and Google. Even Docker, Inc. will include Kubernetes in its Docker distribution. So while Docker, Inc. strongly influences Docker itself, with regard to cluster technology it is turning to a competitor’s offering. Although Kubernetes started as a Google project, it now belongs to the Cloud Native Computing Foundation and is developed in the open. For users, this means more competition and less lock-in.

Docker and Kubernetes are very much alive and have become indispensable in the world of microservices and continuous delivery. Container technologies are even beginning to take over from virtualization, because they are more lightweight and have proven themselves in the complex cluster environments that demanding applications need. Additionally, Docker images make software installation reliable and easy, and are a great alternative to traditional installation tools.

Anyone who still relies on classic virtualization or classic tools for installing software, and has not yet looked at Docker, has ignored an important trend. Whether or not Docker, Inc. earns money is a minor matter.

Eberhard Wolff has 15+ years of experience as an architect and consultant – often at the intersection of business and technology. He is a Fellow at innoQ in Germany. As a speaker, he has given talks at international conferences and as an author, he has written more than 100 articles and books about Microservices and Continuous Delivery. His technological focus is on modern architectures – often involving Cloud, Continuous Delivery, DevOps, Microservices or NoSQL.