Docker introduced Docker Debug today at its DockerCon event now under way in Los Angeles, as well as a “next-generation” cloud-assisted Docker Build and the general availability of Docker Scout, for finding vulnerabilities in software dependencies.

The problem Docker Debug addresses is that when an application fails inside a container, the cause can be hard to trace. “When you shell into a container to try to explore, there’s no tools there for [developers],” Docker CEO Scott Johnston told DevClass. “What Docker Debug provides is an all-in-one tool set that is language independent. Developers can focus on problem-solving and not on setting up tooling for debugging.”

How does it actually work? Docker Debug is itself a container, but one that contains the developer’s debugging tools. “How it works is that the debugging container (toolbox) mounts the broken container filesystem,” a Docker spokesperson told us. “We do some other things like analyzing entry points, validating the architecture of the binaries of the entry point or the CMD, and provide a better UX around understanding what the problem might be.”

In order to create a filesystem that contains both debug tools and what is running in the container, Docker Debug uses the Nix tool to create a second filesystem with the tools (Nix is a package manager and system configuration tool). Then Docker Debug calls mergerfs to merge both filesystems (the original container plus the debug tools). “The result is a filesystem identical to the original container plus all the debugging tools,” we were told.
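To make the merging idea concrete, here is a minimal Python sketch of a union-style lookup across two directory trees. It is a conceptual illustration only, not Docker Debug's implementation and not how mergerfs works internally: the function names (`resolve`, `merged_listing`, `demo`) and the first-branch-wins rule are assumptions chosen for the example.

```python
import tempfile
from pathlib import Path
from typing import Optional


def resolve(branches: list[Path], relative: str) -> Optional[Path]:
    """Return the copy of `relative` from the first branch that has it, else None."""
    for root in branches:
        candidate = root / relative
        if candidate.exists():
            return candidate
    return None


def merged_listing(branches: list[Path]) -> list[str]:
    """List every file visible in the merged view, as sorted relative paths."""
    seen = set()
    for root in branches:
        for path in root.rglob("*"):
            if path.is_file():
                seen.add(path.relative_to(root).as_posix())
    return sorted(seen)


def demo() -> tuple[list[str], str]:
    """Build a fake container tree and a fake toolbox tree, then view them as one."""
    with tempfile.TemporaryDirectory() as tmp:
        container = Path(tmp) / "container"  # stands in for the broken container
        toolbox = Path(tmp) / "toolbox"      # stands in for the debug tools
        (container / "app").mkdir(parents=True)
        (toolbox / "bin").mkdir(parents=True)
        (container / "app" / "server").write_text("application binary")
        (toolbox / "bin" / "curl").write_text("debug tool")

        branches = [container, toolbox]
        found = resolve(branches, "bin/curl")
        return merged_listing(branches), found.read_text()


if __name__ == "__main__":
    print(demo())
```

In this sketch the merged view contains both the application's files and the tool files, while neither source tree is modified, which is the property the spokesperson describes.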

Johnston was keen to emphasize that developers do not need to tangle with these details directly. “It will seem like magic to the developer,” he told us.

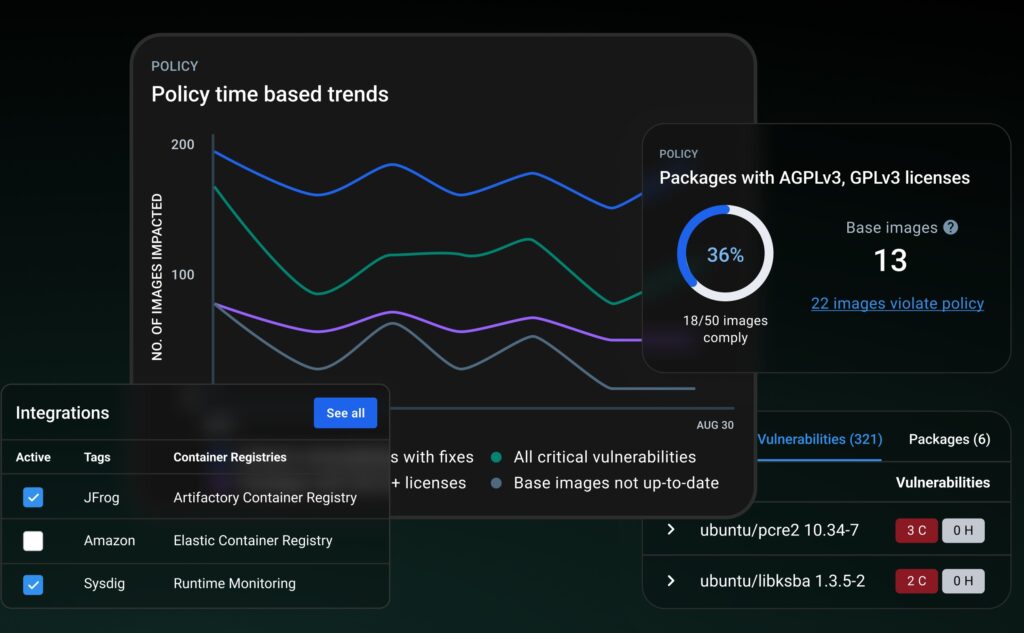

DockerCon also saw the general availability of Scout, previously in early access preview. Scout will find reported vulnerabilities in libraries used by an application, but is it needed when tools like GitHub’s Dependabot do the same thing? “We work with GitHub, not against GitHub, to give the developer a complete holistic view,” Johnston told us, adding: “One example is Sysdig.”

Sysdig is a third-party tool that integrates with Scout, which will “show what is actually being exercised at runtime, and uses that to help prioritize in the dashboard what’s relevant to the developer, because if it is not being exercised, it’s not going to put the developer at risk as much as those that are actually being run.”

A third key announcement was for a new generation of Docker Build. “We’re seeing that devs can spend up to an hour of their day per team member waiting for container image builds to complete,” said Johnston. “The reason is that Docker Build today is a local tool.” Now, a single switch on the command line for Build will offload it to the cloud. “We’re seeing [up to] 39X speedups in builds local versus remote,” Johnston told us. This is not only because more powerful machines can be used, but also thanks to caching. “The team is more often than not using similar base images. So as soon as that image is cached, all the teammates benefit from that,” he said.
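The caching benefit Johnston describes can be sketched in a few lines of Python. This is a toy model under stated assumptions, not Docker's cache design: the class name `SharedLayerCache`, the key scheme (a hash of base image plus build instruction), and the placeholder layer strings are all hypothetical.

```python
import hashlib


class SharedLayerCache:
    """Toy cache shared by a team: identical build steps are built only once."""

    def __init__(self) -> None:
        self._layers: dict[str, str] = {}
        self.builds = 0  # counts how many times real work was done

    @staticmethod
    def key(base_image: str, instruction: str) -> str:
        """Derive a cache key from the inputs that determine the layer."""
        payload = f"{base_image}\n{instruction}".encode()
        return hashlib.sha256(payload).hexdigest()

    def get_or_build(self, base_image: str, instruction: str) -> str:
        """Return the cached layer, building it only on a cache miss."""
        k = self.key(base_image, instruction)
        if k not in self._layers:
            self.builds += 1  # the expensive step would happen here
            self._layers[k] = f"layer:{k[:12]}"
        return self._layers[k]


if __name__ == "__main__":
    cache = SharedLayerCache()
    # Two teammates request the same step on the same base image.
    first = cache.get_or_build("python:3.12", "RUN pip install flask")
    second = cache.get_or_build("python:3.12", "RUN pip install flask")
    print(first == second, cache.builds)
```

The second teammate's request is served from the cache, so only one build is counted. A local-only cache cannot offer this, because each developer's machine starts with its own empty cache.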

Is next-generation Docker Build just for development, or can it be part of a continuous integration (CI) process for deployment? “It initially targeted the dev loop but we’re also seeing it pulled into the CI loop,” Johnston said. For example, it can be called from GitHub Actions or GitLab Pipelines.

How is Docker doing? “We’re up to 20 million monthly active developers,” said Johnston. “From a commercial standpoint, we now have over 79,000 commercial customers.” Docker’s increased revenue – Johnston said in March that it increased by 30X in 3 years – comes partly as a result of unpopular decisions such as changing Docker Desktop from a free to a paid-for product in 2021, and pushing Teams accounts towards more expensive Business subscriptions. The outcome, though, is more investment in Docker tools, as seen in today’s news.