Codeium, an AI coding assistant from Exafunction, has added support for multiple chats in the just-released version 1.4, and has previewed a new feature called Command that generates code in the editor based on the developer's instructions.

Multiple chats means that several conversations can be open in the Codeium side panel, and developers can switch between them, for example to refer back to suggestions from an earlier chat. Another new feature in version 1.4.1, released this week, is support for referencing classes in chat using @, which adds to the existing support for referencing functions. These "chat mentions" point the AI to the specific code it should use when answering a question or suggesting an improvement.

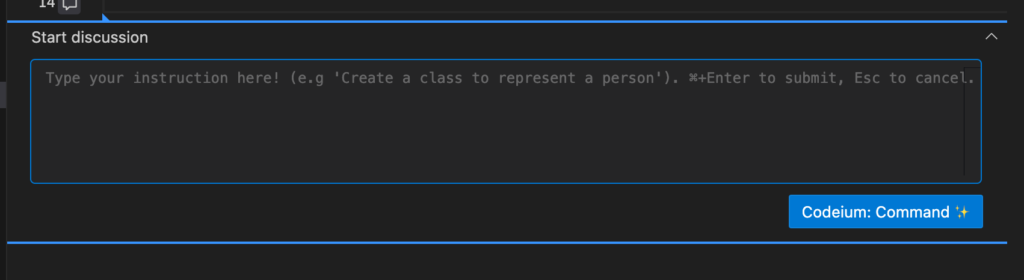

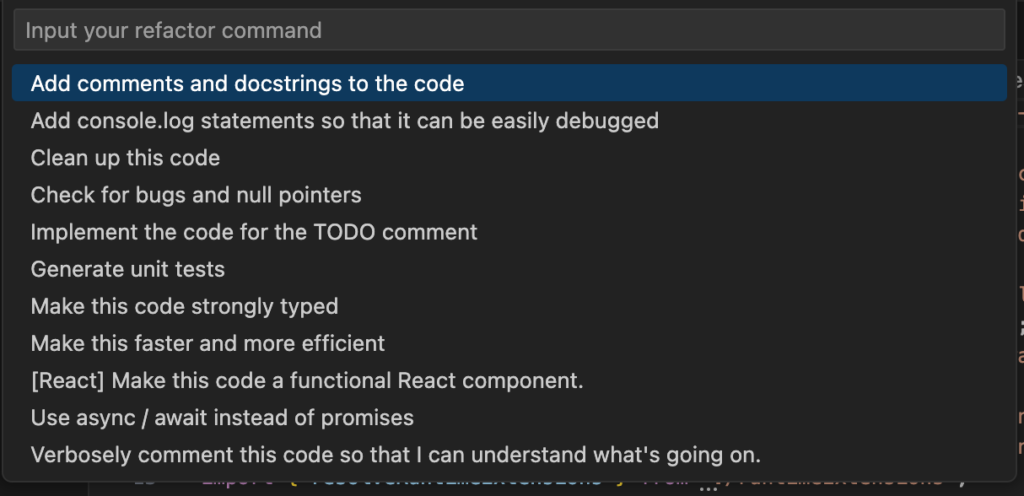

Command is a major new feature that presents an input box in the editor where developers can type instructions such as "add a category column to the product table", "create a class to represent a person" or "design a data structure for a binary tree." Codeium generates the code, inserts it into the editor, and adds Accept and Reject buttons. As with any generative AI system, wording the input to get the best results is a skill in itself.

Codeium is less well known than rivals such as GitHub Copilot, but unlike Copilot it is free for individual developers. The company states that its business model depends on enterprise licenses, and that there will always be a free version, though with limitations such as rate limiting on the Command feature. Codeium is well liked: at the time of writing, the VS Code extension has over 360,000 downloads, a five-star rating from 383 reviews, and many appreciative comments. Codeium is developed by Exafunction, a Mountain View, California company founded by Varun Mohan in 2021. Mohan previously worked at the robotics company Nuro.

More than 70 programming languages are supported, though some better than others. The most common languages, including Python, JavaScript, TypeScript, Java, Go and PHP, have the most complete feature support.

Among IDEs, VS Code and JetBrains IDEs have the best support and are currently required for Chat, but other supported environments include Visual Studio, Jupyter Notebooks, Vim, Neovim, Emacs, Eclipse and Sublime Text.

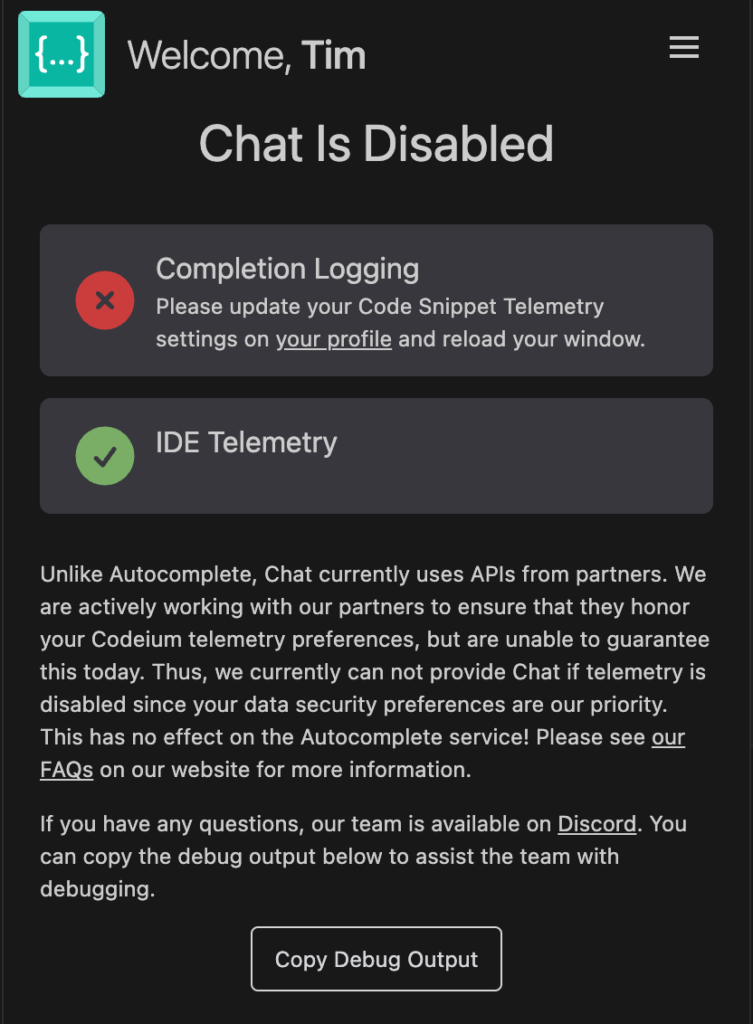

Is it safe to use, though? Codeium makes a point of not training its models on code with non-permissive licenses, including code licensed under the GNU General Public License (GPL). The cloud service does require code snippets and chat messages to be sent, but the company states that these are used only to "perform the model inference on our GPUs" and that it will never persist the data. There is a code snippet telemetry option, on by default, which can be turned off in settings, and the company states that all code is encrypted in transit and that it "never trains our generative autocomplete model on user code." In addition, there is a self-hosted option for enterprises, which the company says means "all processing and data never leaves externally, even to Codeium."

That said, when we tried the Chat feature we were shown a message stating that it uses "APIs from partners" and that the company was "unable to guarantee" that they would honor Codeium telemetry preferences. As a result, Chat does not work unless code snippet telemetry is enabled, except for self-hosted customers, for whom a different method is used. The self-hosted option is clearly the best solution for organisations worried that AI coding assistance could somehow leak their code.