Stack Overflow, the web site to which developers frequently turn for guidance on coding issues, said that its traffic drop, related to competition from AI assistants, has been exaggerated, though our analysis suggests it remains significant. The company has, however, mostly resolved its moderator strike, which was called over issues related to AI-generated content.

There have been various reports of traffic dropping, including our own, as developers turn to the convenience of in-editor coding assistants to solve problems. The company now says the more extreme analyses, showing drops of 35 percent to 50 percent, were incorrect and caused by changes to its Google Analytics configuration.

“This year, overall, we’re seeing an average of ~5% less traffic compared to 2022,” claimed director of product management Des Darilek. Yet she also acknowledged that in April traffic was down by 14%, attributed to “developers trying GPT-4”, the model from OpenAI, the company whose technology also powers GitHub Copilot. There is therefore a decline, even if some reports have exaggerated it.

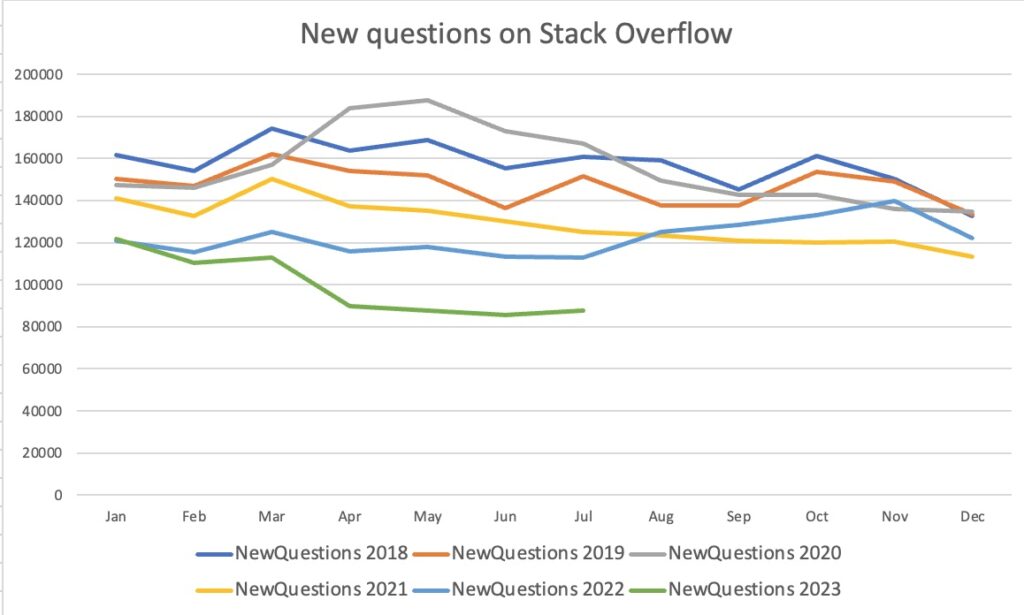

Another way to look at this is to count how many questions are asked. This report shows new questions dropping since last year; and we followed up with our own SEDE (Stack Exchange Data Explorer) query showing that April was not a one-off. Year on year, April was down by 22.5%, May 25.6%, June 24.5% and July 22.2%, by questions asked.
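The year-on-year figures above are plain percentage changes between the same month in consecutive years. As a minimal sketch of that arithmetic, assuming monthly question counts are already to hand: the function name and the counts below are hypothetical placeholders chosen for round numbers, not data from our SEDE query.

```python
def year_on_year_change(previous: int, current: int) -> float:
    """Percentage change from `previous` to `current`; negative means a drop."""
    if previous <= 0:
        raise ValueError("previous must be a positive count")
    return (current - previous) / previous * 100.0


# Hypothetical counts for illustration only, NOT real Stack Overflow figures:
# (questions in the month last year, questions in the same month this year)
hypothetical_counts = {
    "Month A": (1000, 775),
    "Month B": (1000, 744),
}

for month, (last_year, this_year) in hypothetical_counts.items():
    change = year_on_year_change(last_year, this_year)
    print(f"{month}: {change:.1f}% year on year")
```

Comparing like-for-like months in this way avoids mistaking seasonal variation for a trend.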

Stack Overflow’s hoped-for solution is a new service in preparation called OverflowAI, plus something perhaps more important, a Visual Studio Code add-in that could rival the convenience of Copilot – though its focus is different as it will not directly insert code.

The good news for the company, though, is that the moderator “strike” is mostly over and relations between staff and volunteer moderators have improved. The key issue, described in an open letter, was that moderators were restricted from removing answers to technical questions where the reason for removal was that they were AI-generated. The moderators argued that such answers are prone to “incorrect information and plagiarism.”

The possibility of incorrect answers is a problem inherent to generative AI, as used by OpenAI’s ChatGPT, Google’s Bard, and other such systems. Generative AI is good at producing fluent answers to the question posed, but there is no guarantee of correctness. The FAQ for GitHub Copilot, for example, states that “Public code may contain insecure coding patterns, bugs, or references to outdated APIs or idioms. When GitHub Copilot synthesizes code suggestions based on this data, it can also synthesize code that contains these undesirable patterns.”

The outcome of negotiations was that Stack Exchange (the company behind Stack Overflow) agreed to allow the removal of content based on “a single strong indicator of GPT usage, or on several weaker indicators.” The company’s previous policy on this was made obsolete. Further, the company committed to continuing to maintain data dumps of subscriber content, API access and the Stack Exchange Data Explorer, though with the rider that it may “place guardrails around them to ensure that companies constructing language models, etc, are charged for access” – a condition that some community members regard as inappropriate given that data dumps are licensed under a Creative Commons license, CC BY-SA 4.0, which allows adaptation for any purpose subject to appropriate credit. In addition, there are changes to the way Stack Exchange communicates with moderators and a process for when Stack Exchange itself appears to have violated its moderator agreement.

This strike was not comparable to an employee strike, because the Stack Overflow moderators are volunteers; and in typical Stack Overflow style, the question of whether the strike was over was itself a matter of debate – though in practice it seems that moderation is back to normal.

AI is the common thread between the strike, the traffic, and the new services. Stack Overflow remains of huge importance to many developers, with its advantage over AI being the moderation and consensus of experts applied to its questions – provided they attract sufficient interest. That said, the site’s struggle with AI and its implications looks set to continue.